Claude Opus 4.5 vs Sonnet 4.5: Which Should You Use?

Anthropic's flagship vs their best coding model

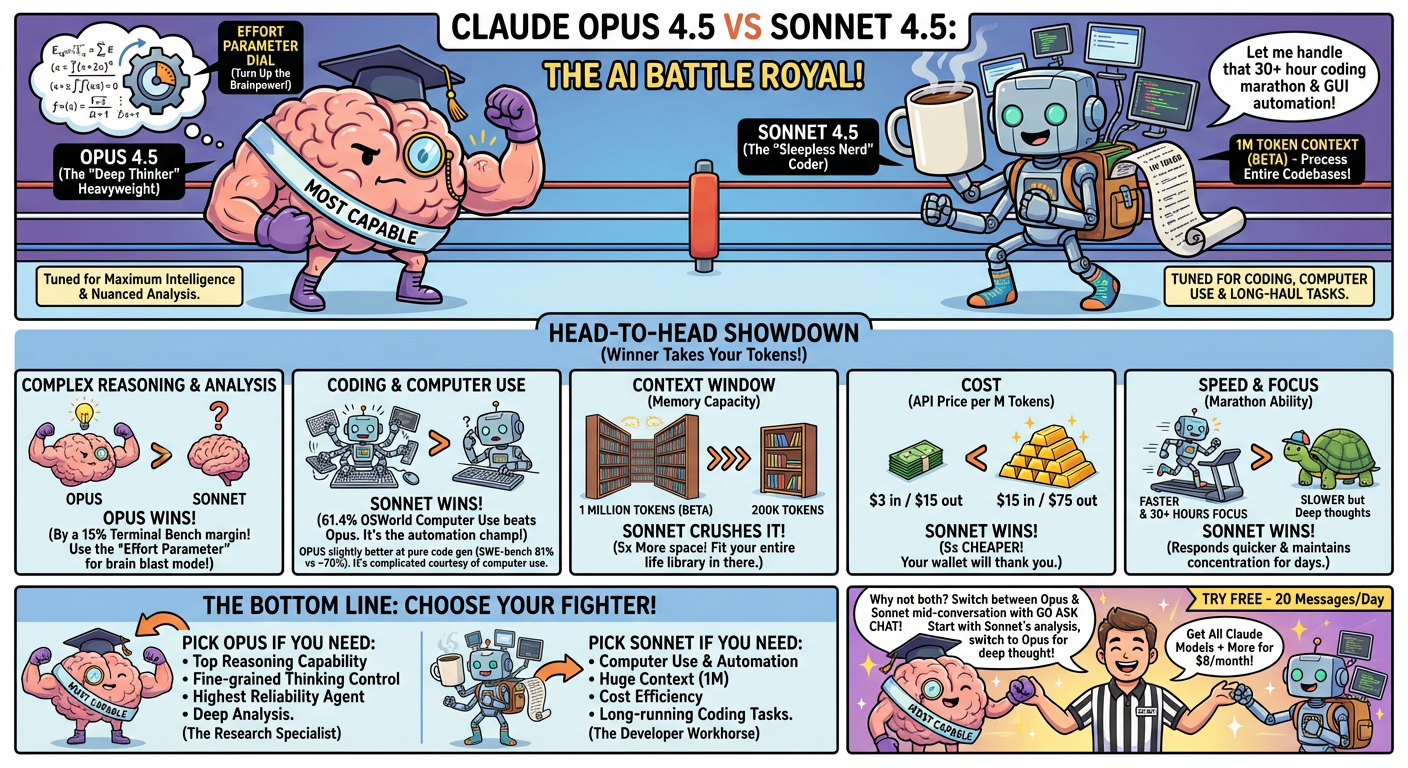

Both are solid, but they're tuned for different use cases. Opus 4.5 is Anthropic's most capable model overall, while Sonnet 4.5 is their strongest for coding and computer use.

Here's a quick breakdown to help you decide:

| Specification | Claude Opus 4.5 | Claude Sonnet 4.5 |

|---|---|---|

| Position | Most capable | Strong for coding/agents |

| Context Window | 200K tokens[1] | 1M tokens (beta)[1] |

| OSWorld (Computer Use) | Not primary focus | 61.4%[1] |

| SWE-bench Verified | 81%[2] | ~70%[2] |

| Terminal Bench | 15% better than Sonnet[1] | Baseline |

| API Pricing | $15/$75 per M tokens[3] | $3/$15 per M tokens[3] |

| Speed | Slower | Faster |

| Effort Parameter | Yes (unique) | No |

| Long Task Focus | Complex reasoning | 30+ hours on tasks[1] |

Opus 4.5 is Anthropic's most capable model. It's designed for complex reasoning, nuanced analysis, and tasks requiring deep understanding.

Strong Points:

- 81% SWE-bench Verified[2] — Highest coding benchmark among Claude models

- Effort Parameter[1] — Unique ability to control reasoning depth per request

- 50-75% Fewer Tool Errors[1] — More reliable for agentic workflows

- 15% Better on Terminal Bench[1] — Complex multi-step tasks

- Extended Thinking — Preserves reasoning across multi-turn conversations

Good For:

- Complex reasoning and analysis

- Tasks requiring maximum intelligence

- Agentic workflows where reliability matters

- Research and deep analysis

Sonnet 4.5 is Anthropic's strongest coding model and leads in computer use. It offers a 1M token context window (beta) and is tuned for developer workflows.

Strong Points:

- 61.4% OSWorld[1] — Strong computer use (up from 42.2% on Sonnet 4)

- 1M Token Context (Beta)[1] — Process entire codebases at once

- 5x Cheaper[3] — $3/$15 vs $15/$75 per million tokens

- 30+ Hours Focus[1] — Can maintain concentration on complex multi-step tasks

- 0% Edit Error Rate[1] — Down from 9% on Sonnet 4 (internal benchmark)

Good For:

- Coding and development

- Computer use and automation

- Processing large codebases (1M context)

- Long-running agentic tasks

- Cost-sensitive applications

Complex Reasoning — Winner: Opus 4.5

Opus is positioned as Anthropic's most capable model. It scores 15% higher than Sonnet on Terminal Bench for complex tasks,[1] and its unique effort parameter lets you dial up reasoning depth when needed.

Coding & Development — It's Complicated

Opus leads on SWE-bench Verified (81% vs ~70%),[2] but Sonnet dominates OSWorld computer use (61.4%)[1] and has the 1M context window for processing entire codebases. For pure code generation, Opus is slightly better. For agentic coding with computer use, Sonnet leads.

Context Window — Winner: Sonnet 4.5

Sonnet's 1M token context (beta) is much larger than Opus's 200K. If you're working with large codebases, long documents, or extensive conversation histories, Sonnet can handle 5x more information.

Speed — Winner: Sonnet 4.5

Sonnet is categorized as "slow" but is faster than Opus, which is also "slow." Neither is fast, but Sonnet responds quicker.

Cost — Winner: Sonnet 4.5

At $3/$15 per million tokens, Sonnet is 5x cheaper than Opus ($15/$75).[3] For high-volume applications, Sonnet is easier on cost.

Long-Running Tasks — Winner: Sonnet 4.5

Anthropic reports Sonnet can maintain focus for 30+ hours on complex multi-step tasks.[1] Combined with its 1M context, it fits long coding sessions.

Choose Opus 4.5 if you need:

- Top reasoning capability

- Fine-grained control over thinking depth (effort parameter)[1]

- Highest reliability for tool use (50-75% fewer errors)[1]

- Complex analysis and research tasks

Choose Sonnet 4.5 if you need:

- Computer use and automation (61.4% OSWorld)[1]

- Large context processing (1M tokens)[1]

- Cost efficiency (5x cheaper)[3]

- Long-running coding tasks (30+ hours)[1]

- General coding assistance

Opus and Sonnet cover different cases. Use Opus when you need maximum reasoning depth. Use Sonnet when you need large context, computer use, or cost efficiency.

With Go Ask Chat, you can switch between Opus and Sonnet mid-conversation. Start with Sonnet for code analysis (using its 1M context), switch to Opus for complex architectural decisions, then back to Sonnet for implementation.

Get All Claude Models + More

Access Opus, Sonnet, Haiku, plus GPT-5.2, Gemini, and 20 more models for $8/month.

Try Free - 20 Messages/DayNo. Opus is stronger at reasoning, but Sonnet leads in computer use, has 5x the context window, costs 5x less, and maintains focus longer on multi-step tasks. The right model depends on your specific task.

For pure code generation benchmarks, Opus leads (81% SWE-bench).[2] For agentic coding with computer use, Sonnet leads (61.4% OSWorld).[1] For processing large codebases, Sonnet's 1M context is essential. Most developers will find Sonnet sufficient and more practical.

Sonnet 4.5 was specifically optimized for developer workflows that require processing entire codebases. The 1M context (beta) reflects this focus. Opus prioritizes reasoning depth over context length.

Yes. Go Ask Chat includes Opus 4.5, Sonnet 4.5, Haiku 4.5, plus GPT, Gemini, Grok, and more. Switch between Claude models with one click, even mid-conversation.

- Anthropic - Claude model specifications, OSWorld benchmark, context windows, and performance metrics

- SWE-bench Leaderboard - Software engineering benchmark scores for AI models

- Anthropic Pricing - Claude API and Pro subscription pricing

- Go Ask Chat Pricing - Multi-model subscription pricing and features

† Specifications reflect information at time of publication. Verify current data at source links.