GPT-5.2 vs Claude Opus 4.5: Complete Comparison

OpenAI vs Anthropic - which flagship AI wins in 2026?

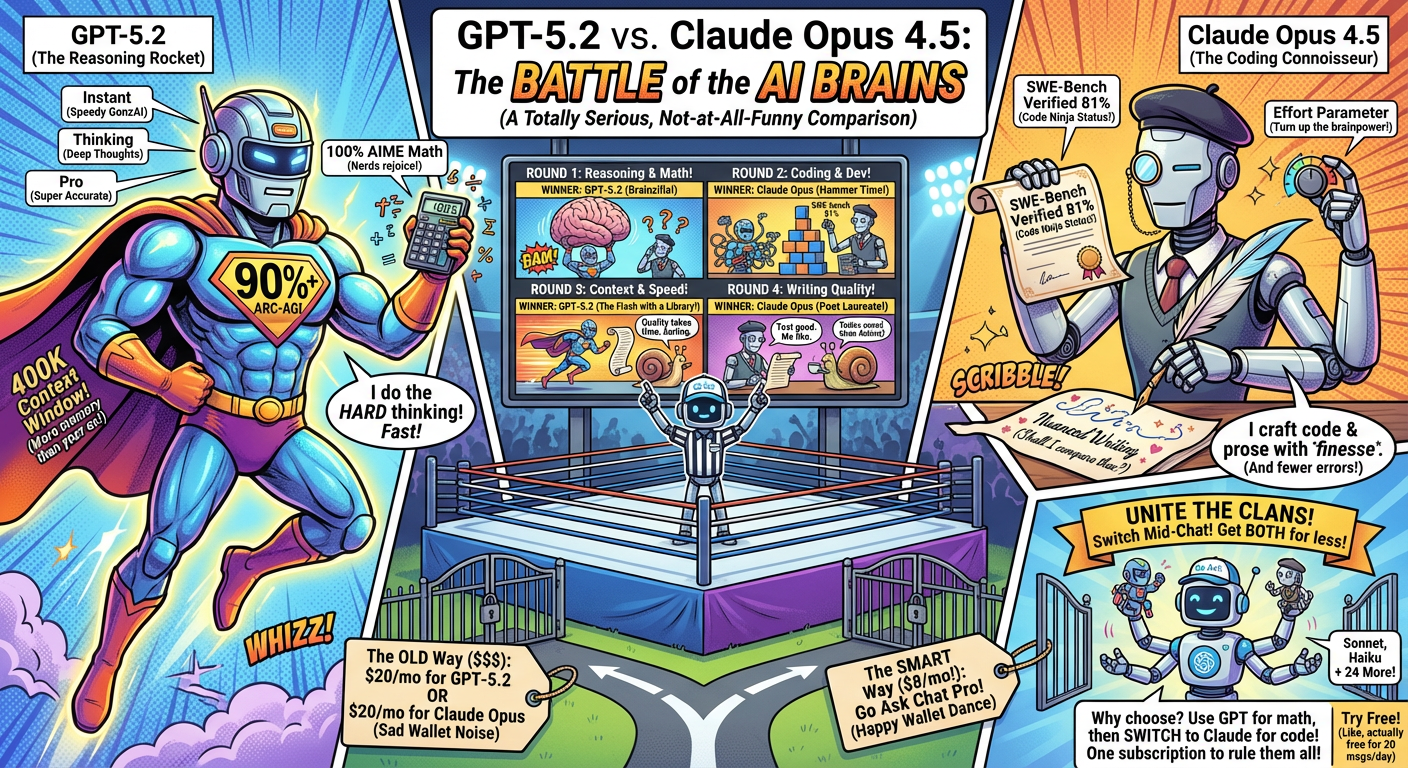

GPT-5.2 and Claude Opus 4.5 are the flagship models from OpenAI and Anthropic. Both represent major research investment, but they do different things well.

Here is the short version to help you choose between them, or use both.

| Specification | GPT-5.2 | Claude Opus 4.5 |

|---|---|---|

| Provider | OpenAI | Anthropic |

| Context Window | 400K tokens[3] | 200K tokens[4] |

| Output Tokens | 128K | 8K |

| ARC-AGI-1 | 90%+ (first ever)[5] | Not reported |

| AIME 2025 Math | 100%[3] | Not reported |

| SWE-Bench Verified | ~75% | 81%[6] |

| SWE-Bench Pro | 55.6%[3] | Not reported |

| Thinking Mode | Yes (Thinking variant) | Yes (effort parameter) |

| Speed | Fast (Instant mode) | Slower |

Released December 11, 2025, GPT-5.2 is OpenAI's latest model[3]. It's the first model to score above 90% on ARC-AGI-1[5] and achieves 100% on AIME 2025 math benchmarks[3].

Strong Points

- Reasoning - First model to break 90% on ARC-AGI[5], the benchmark designed to test general reasoning

- Math - Perfect 100% score on AIME 2025 mathematical reasoning

- Large Context - 400K token input allows processing entire codebases

- Speed Options - Instant mode for quick tasks, Thinking mode for complex reasoning, Pro mode for accuracy

- 55.6% SWE-Bench Pro[3] - Strong agentic coding performance

Variants Available

- GPT-5.2 (Instant) - Fast responses for everyday tasks

- GPT-5.2 (Thinking) - Deeper reasoning with thinking tokens

- GPT-5.2 Pro - Maximum accuracy for difficult questions

Released November 2025, Claude Opus 4.5 is Anthropic's most capable model[4]. It leads on SWE-bench Verified[6] and offers the effort parameter for controlling reasoning depth.

Strong Points

- Coding - 81% on SWE-bench Verified[6]

- Effort Parameter - Unique ability to control how much "thinking" the model does

- Fewer Tool Errors - 50-75% reduction in tool calling errors compared to competitors[4]

- Quality Writing - Known for nuanced, well-structured prose

- Instruction following - Strong at complex multi-step tasks

What Stands Out

- Effort Parameter - Control reasoning depth per request

- Extended Thinking - Preserved across multi-turn conversations

- 15% Better Than Sonnet - On Terminal Bench complex tasks

Reasoning & Problem Solving — Winner: GPT-5.2

GPT-5.2's ARC-AGI score of 90%+ is rare[5]. The model also achieves 100% on AIME 2025[3], showing very strong mathematical reasoning. For pure reasoning tasks, GPT-5.2 currently leads.

Coding & Development — Winner: Claude Opus 4.5

Claude Opus 4.5 leads on SWE-bench Verified (81%)[6], the standard benchmark for real-world coding ability. It also shows 50-75% fewer tool calling errors[4], which matters for agentic coding workflows. For day-to-day coding assistance, Opus has the edge.

Context Window & Memory — Winner: GPT-5.2

GPT-5.2's 400K context window is twice the size of Opus's 200K. For processing large codebases, long documents, or extended conversations, GPT-5.2 can handle more information at once.

Speed & Efficiency — Winner: GPT-5.2

GPT-5.2's Instant mode provides fast responses for everyday tasks. Opus 4.5 is categorized as "slow" - it prioritizes quality over speed. If you need quick answers, GPT-5.2 is faster.

Writing Quality — Winner: Claude Opus 4.5

Claude models are known for nuanced, well-structured writing. Opus 4.5 is strong at long-form content, creative writing, and maintaining consistent tone across documents.

Subscription Pricing

ChatGPT Plus: $20/month[1] - GPT-5.2 access

Claude Pro: $20/month[2] - Opus 4.5 access

Go Ask Chat Pro: $8/month[7] - Both GPT-5.2 AND Opus 4.5 + 26 more models

Both ChatGPT Plus and Claude Pro cost $20/month for access to a single AI family. Go Ask Chat gives you both for $8/month.

Choose GPT-5.2 if you need:

- Complex mathematical reasoning (100% AIME)

- General reasoning tasks (90%+ ARC-AGI)

- Large context processing (400K tokens)

- Fast responses (Instant mode)

Choose Claude Opus 4.5 if you need:

- Production coding assistance (81% SWE-bench)

- Fewer tool/API errors (50-75% reduction)

- High-quality writing

- Fine-grained reasoning control (effort parameter)

GPT-5.2 and Claude Opus 4.5 cover different cases. GPT is strong at reasoning and math. Claude is strong at coding and writing. Use both depending on the task.

With Go Ask Chat, you can switch between GPT-5.2 and Claude Opus mid-conversation. Start with GPT for reasoning, switch to Claude for implementation. One subscription, all models.

Use Both for $8/month

Get GPT-5.2, Claude Opus 4.5, and 26 more models. No separate subscriptions.

Try Free - 20 Messages/DayNeither is universally better. GPT-5.2 leads on reasoning benchmarks (ARC-AGI, AIME). Claude Opus 4.5 leads on coding benchmarks (SWE-bench). The right model depends on your task.

Claude Opus 4.5 edges out GPT-5.2 on SWE-bench Verified (81% vs ~75%) and has fewer tool calling errors. For production coding work, Opus has a slight advantage. However, GPT-5.2's larger context window (400K vs 200K) can be helpful for large codebases.

GPT-5.2 in Instant mode is faster than Claude Opus 4.5. Opus prioritizes quality over speed.

Yes. Go Ask Chat includes both GPT-5.2 and Claude Opus 4.5, plus Sonnet, Haiku, and 24 more models. Switch between them mid-conversation with one click.

- OpenAI ChatGPT Pricing

- Anthropic Claude Pricing

- OpenAI GPT-5.2 Announcement

- Anthropic Claude Opus 4.5 Announcement

- ARC Prize Leaderboard

- SWE-bench Leaderboard

- Go Ask Chat Pricing

† Specifications reflect announcements and benchmarks at time of publication. Verify current data at source links.