Gemini 3 Pro vs Claude Opus 4.5: Which AI Wins?

Google vs Anthropic - massive context vs coding mastery

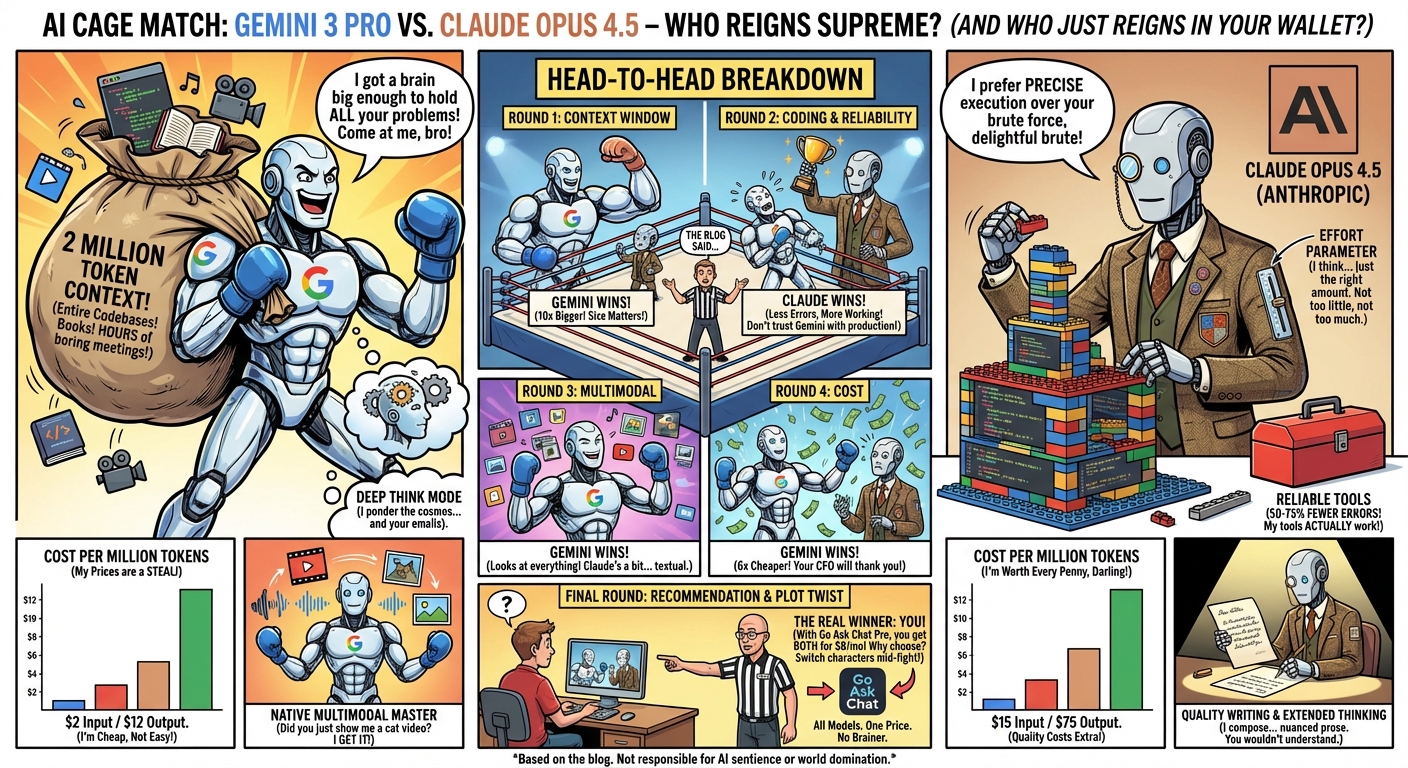

Gemini 3 Pro and Claude Opus 4.5 are the flagship models from Google and Anthropic. Gemini offers a 2M token context window.[1] Claude Opus leads in coding benchmarks and reliability. Here is the short version:

| Specification | Gemini 3 Pro | Claude Opus 4.5 |

|---|---|---|

| Provider | Anthropic | |

| Context Window | 2M tokens[1] | 200K tokens[2] |

| Output Tokens | 65K | 8K |

| SWE-bench Verified | ~70% | 81%[3] |

| Terminal-Bench 2.0 | 54.2% | Not reported |

| Deep Think Mode | Yes | Effort parameter |

| Multimodal | Native | Yes |

| API Pricing | $2/$12 per M tokens[4] | $15/$75 per M tokens[5] |

| Tool Calling Errors | Standard | 50-75% fewer[2] |

Released December 2025, Gemini 3 Pro is Google's flagship model. Its 2M token context window[1] is the largest currently available, and its native multimodal capabilities stand out.

Strong Points:

- 2M Token Context — Process entire codebases, book-length documents, hours of transcripts

- Deep Think Mode — Extended reasoning for complex problems

- 54.2% Terminal-Bench 2.0 — Strong tool use and terminal operation

- Native multimodal — Strong image, video, and audio understanding

- Generative Interfaces — New capability for visual layout and dynamic views

- $2/$12 pricing[4] — Cheaper than Opus at API level

What Stands Out:

- Gemini Agent — Orchestrates complex multi-step tasks

- Google ecosystem — Tight integration with Workspace, AI Studio, Vertex

- Agentic Coding — Strong performance on complex zero-shot coding tasks

Released November 2025, Claude Opus 4.5 is Anthropic's most capable model. It leads on coding benchmarks and offers the effort parameter for controlling reasoning depth.

Strong Points:

- 81% SWE-bench Verified[3] — Strong coding benchmark

- Effort Parameter — Control how much "thinking" per request

- 50-75% Fewer Tool Errors[2] — More reliable for agentic workflows

- Quality Writing — Known for nuanced, well-structured prose

- Extended Thinking — Preserved across multi-turn conversations

What Stands Out:

- Effort Control — Fine-tune reasoning depth per request

- Instruction following — Strong at complex multi-step tasks

- 15% Better Than Sonnet — On Terminal Bench complex tasks

Context Window — Winner: Gemini 3 Pro (10x larger)

Gemini's 2M tokens[1] vs Opus's 200K[2] is a big difference. Gemini can process entire codebases, book-length documents, or hours of meeting transcripts in a single context. For large-scale analysis, Gemini wins.

Coding & Development — Winner: Claude Opus 4.5

Opus leads on SWE-bench Verified (81% vs ~70%)[3] and has 50-75% fewer tool calling errors.[2] For production coding assistance and agentic workflows that require reliability, Opus has the edge.

Multimodal Understanding — Winner: Gemini 3 Pro

Gemini 3 Pro is built for multimodal tasks. It is strong at image understanding, video analysis, and audio processing. While Opus supports images and documents, Gemini's multimodal capabilities are stronger.

Reasoning — Tie (Different Approaches)

Gemini offers Deep Think mode for extended reasoning. Opus offers the effort parameter to control reasoning depth. Both can handle complex reasoning tasks, with slightly different interfaces.

Tool Use — Winner: It Depends

Gemini scores higher on Terminal-Bench 2.0 (54.2%) for tool use. But Opus has 50-75% fewer tool calling errors.[2] Gemini may attempt more, but Opus is more reliable when it acts.

Cost — Winner: Gemini 3 Pro (6x cheaper)

At $2/$12 per million tokens,[4] Gemini is cheaper than Opus at $15/$75.[5] For high-volume applications, Gemini costs less.

Choose Gemini 3 Pro if you need:

- Massive context (2M tokens for entire codebases)[1]

- Strong multimodal understanding (images, video, audio)

- Cost efficiency (6x cheaper than Opus)

- Google ecosystem integration

- Long document or video analysis

Choose Claude Opus 4.5 if you need:

- Highest coding accuracy (81% SWE-bench)[3]

- High reliability (50-75% fewer tool errors)[2]

- Fine-grained reasoning control (effort parameter)

- Quality long-form writing

- Reliable agentic workflows

Gemini and Opus do well at different tasks. Use Gemini for processing large documents and multimodal tasks. Use Opus for coding and reliability-critical workflows.

With Go Ask Chat, switch between Gemini 3 Pro and Claude Opus mid-conversation. Process a large codebase with Gemini's 2M context, then switch to Opus for reliable implementation. One subscription, all models.

Get Gemini + Claude for $8/month

Access Gemini 3 Pro, Claude Opus 4.5, plus GPT, Grok, and 24 more models.

Try Free - 20 Messages/DayClaude Opus 4.5 leads on coding benchmarks (81% SWE-bench)[3] and has fewer errors. However, Gemini's 2M context[1] lets you process entire codebases at once. For code generation accuracy, use Opus. For large-scale code analysis, use Gemini.

Gemini 3 Pro's 2M context[1] makes it better for analyzing large documents, research papers, or extensive datasets. For shorter, more focused analysis requiring maximum intelligence, Opus is strong.

Both are positioned as medium-speed models. Neither is optimized for speed. For fast responses, consider Gemini Flash or Claude Haiku.

Yes. Go Ask Chat includes Gemini 3 Pro, Gemini Flash, Claude Opus, Sonnet, Haiku, plus GPT, Grok, and more. Switch between models with one click.

- Google Gemini API Documentation - Gemini 3 Pro specifications and 2M token context window

- Anthropic Claude Opus 4.5 Announcement - 200K context, 50-75% fewer tool errors

- SWE-bench Verified Leaderboard - Claude Opus 4.5 at 81%

- Google AI Pricing - Gemini 3 Pro API and Gemini Advanced subscription

- Anthropic Pricing - Claude Opus 4.5 API and Claude Pro subscription

- Go Ask Chat Pricing - Pro plan at $8/month

† Specifications reflect announcements and benchmarks at time of publication. Verify current data at source links.